How to Copy Directories in HDFS Using hdfs dfs -cp

The Hadoop Distributed File System (HDFS) is a powerful distributed file system designed for storing massive amounts of data. A fundamental operation in HDFS is copying files and directories. In this article, we'll explore how to use the hdfs dfs -cp command for efficiently copying directories in HDFS.

Understanding hdfs dfs -cp

The hdfs dfs -cp command is a versatile tool for copying files and directories within HDFS. It allows you to:

- Copy a single file:

hdfs dfs -cp <source_path> <destination_path>

- Copy multiple files (the destination must be an existing directory):

hdfs dfs -cp <source_path1> <source_path2> ... <destination_directory>

- Copy an entire directory:

hdfs dfs -cp <source_directory> <destination_directory>

Key Concepts

- Source Path: The location of the file or directory you want to copy.

- Destination Path: The location where you want the copied data to be placed.

- Recursive Copying: hdfs dfs -cp copies directories recursively by default, including all files and subdirectories within the source directory. Unlike the Unix cp command, it has no -r flag, and the command rejects -r as an illegal option.

Copying Directories: A Step-by-Step Guide

-

Connect to HDFS: Before executing any commands, you need to connect to the HDFS cluster. This is typically done using the

hadoop fscommand in a shell session. For example:hadoop fs -ls / -

Specify Source and Destination Paths: Identify the source directory you want to copy and the destination directory where you want the copy to reside. Make sure both paths are valid HDFS paths.

-

Execute the

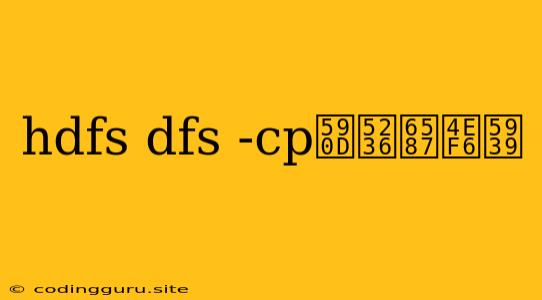

hdfs dfs -cpCommand: Use the following command structure to copy a directory:hdfs dfs -cp -rReplace

<source_directory>and<destination_directory>with your actual HDFS paths. -

Verify the Copy: After the command completes, use the

hdfs dfs -lscommand to verify that the directory and its contents have been successfully copied to the destination location.
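The steps in this guide can be sketched as a small shell function. This is only an illustration under stated assumptions: copy_hdfs_dir is a hypothetical helper name (not part of Hadoop), and it assumes the hdfs client is on your PATH and already configured for your cluster.

```shell
# Sketch: copy an HDFS directory and list the result.
# copy_hdfs_dir is a hypothetical helper, not a Hadoop command.
copy_hdfs_dir() {
  local src="$1" dst="$2"

  # Make sure the source is an existing HDFS directory
  # (-test -d exits with status 0 when the path is a directory).
  if ! hdfs dfs -test -d "$src"; then
    echo "source is not an HDFS directory: $src" >&2
    return 1
  fi

  # Copy the directory; hdfs dfs -cp recurses into directories by default.
  hdfs dfs -cp "$src" "$dst" || return 1

  # List the destination recursively so the result can be inspected.
  hdfs dfs -ls -R "$dst"
}

# Usage (placeholder paths):
#   copy_hdfs_dir /user/your_username/data /user/your_username/backup_data
```

Stopping early when the source check or the copy fails keeps the final listing from suggesting that a failed copy succeeded.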

Example: Copying the "data" Directory

Let's say you have a directory called "data" located at /user/your_username/data in HDFS. You want to create a copy of this directory at /user/your_username/backup_data. Here's how you'd use hdfs dfs -cp:

hdfs dfs -cp /user/your_username/data /user/your_username/backup_data
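One way to sanity-check a copy like this is to compare the summary that hdfs dfs -count prints for each path (directory count, file count, and content size in bytes, followed by the path). The sketch below assumes that output layout; same_hdfs_counts is a hypothetical helper name, and matching counts are a quick consistency check, not a byte-for-byte comparison.

```shell
# Sketch: compare directory count, file count, and total size of two HDFS paths.
# same_hdfs_counts is a hypothetical helper, not a Hadoop command.
same_hdfs_counts() {
  local src_counts dst_counts

  # Keep the first three columns of the -count output and drop the path name.
  src_counts=$(hdfs dfs -count "$1" | awk '{print $1, $2, $3}')
  dst_counts=$(hdfs dfs -count "$2" | awk '{print $1, $2, $3}')

  # Succeed only if there was output for the source and both summaries match.
  [ -n "$src_counts" ] && [ "$src_counts" = "$dst_counts" ]
}

# Usage (placeholder paths):
#   same_hdfs_counts /user/your_username/data /user/your_username/backup_data \
#     && echo "counts match"
```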

Important Considerations

- Permissions: Ensure you have the necessary permissions to write to the destination directory in HDFS.

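- Existing Data: hdfs dfs -cp does not silently overwrite existing data. If a directory already exists at the destination path, the source directory is copied into it, as with the Unix cp command, so the copy ends up at <destination_directory>/<source_directory_name>. If a file being copied already exists at its target path, the command reports a "File exists" error unless you add the -f flag, which overwrites it. Use -f with caution to avoid accidental data loss.

- Performance: Copying large directories can take some time, because the data is read and written through the client that runs the command. The duration depends on the size of the data and the network bandwidth available.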
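To avoid surprises with existing data, a script can check the destination before copying. The following is a minimal sketch, assuming a configured hdfs client; safe_hdfs_cp is a hypothetical helper name chosen for illustration.

```shell
# Sketch: refuse to copy when the destination path already exists.
# safe_hdfs_cp is a hypothetical helper, not a Hadoop command.
safe_hdfs_cp() {
  local src="$1" dst="$2"

  # -test -e exits with status 0 when the path exists.
  if hdfs dfs -test -e "$dst"; then
    echo "destination already exists, not copying: $dst" >&2
    return 1
  fi

  hdfs dfs -cp "$src" "$dst"
}

# Usage (placeholder paths):
#   safe_hdfs_cp /user/your_username/data /user/your_username/backup_data
```

The check and the copy are separate commands, so another client could still create the destination in between; treat this as a guard against mistakes, not as a lock.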

Conclusion

The hdfs dfs -cp command is a crucial tool for managing data within HDFS. Understanding how to use it effectively allows you to copy files and directories easily, for example as part of a backup strategy. Remember that directories are copied recursively without any -r flag, and that existing files at the destination are only overwritten when you pass -f. With proper planning and execution, copying directories in HDFS becomes a streamlined process.